Strikeout rates through the years

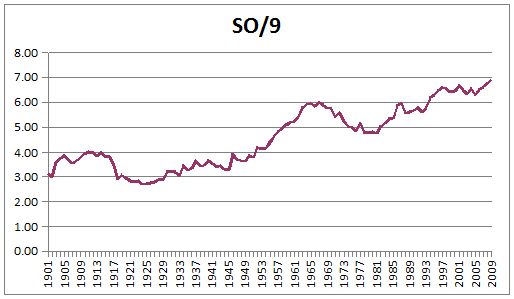

I’ve been thinking about strikeouts. Specifically, I’ve been thinking about pitcher strikeout rates (K/9) and how they have changed over time.

When you imagine a pitcher who blows people away, do you have a specific K/9 in mind? The answer might depend on when the question was asked. Over the past 100+ years, strikeout rates have steadily climbed, with periodic dips here and there:

In the first two decades of the 20th century, rates for all of MLB typically hovered in the 3.5-3.7 range. In the ’20s, they slid to around 2.8. They returned to the mid-3.0s until 1952, when a new high of 4.19 was established (breaking the previous mark of 4.00, set in 1911). Since then, well, this will be easier to view as a table:

| Years | Low | High | Avg* |
|---|---|---|---|
| 1901-1909 | 2.98 | 3.87 | 3.55 |
| 1910-1919 | 2.89 | 4.00 | 3.67 |
| 1920-1929 | 2.69 | 2.95 | 2.81 |
| 1930-1939 | 3.04 | 3.63 | 3.32 |
| 1940-1949 | 3.27 | 3.89 | 3.55 |
| 1950-1959 | 3.77 | 5.09 | 4.40 |
| 1960-1969 | 5.18 | 5.99 | 5.70 |
| 1970-1979 | 4.77 | 5.75 | 5.15 |
| 1980-1989 | 4.75 | 5.96 | 5.34 |
| 1990-1999 | 5.59 | 6.61 | 6.14 |
| 2000-2009 | 6.30 | 6.91 | 6.56 |

*This isn’t a true average. I’ve simply summed the K/9 for each season and divided by number of seasons (as opposed to summing strikeouts and innings), which gives us a rough enough approximation for big-picture stuff.
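To make the footnote's distinction concrete, here is a minimal Python sketch comparing the two ways of averaging. The season totals are invented for illustration (they are not Baseball-Reference figures), and the function names are hypothetical.

```python
# Hypothetical (strikeouts, innings pitched) totals for two league seasons.
# These numbers are made up purely to show how the two methods can differ.
seasons = [(1000, 2000.0), (3000, 3000.0)]


def k_per_9(strikeouts, innings):
    """Strikeouts per nine innings."""
    return 9.0 * strikeouts / innings


def mean_of_season_rates(seasons):
    """The shortcut used in the table: average the per-season K/9 values."""
    rates = [k_per_9(so, ip) for so, ip in seasons]
    return sum(rates) / len(rates)


def pooled_rate(seasons):
    """The 'true' average: sum strikeouts and innings first, then divide."""
    total_so = sum(so for so, _ in seasons)
    total_ip = sum(ip for _, ip in seasons)
    return k_per_9(total_so, total_ip)


print(mean_of_season_rates(seasons))  # 6.75
print(pooled_rate(seasons))           # 7.2
```

The two methods agree exactly only when every season has the same number of innings. Real league seasons are much closer in size than this exaggerated example, which is why the shortcut is a reasonable approximation.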

A few things caught my eye:

- The lowest aggregate strikeout rates in the ’60s (5.18) were higher than the highest in the ’50s (5.09)

- The highest rates in the ’70s and ’80s (5.96) fall short of the lowest rates of the 2000s (6.30)

- What would have been an exceptionally high rate in the ’90s (6.61) is now slightly above average (6.56)

I won’t get into possible reasons for the steady climb (e.g., less emphasis on batters making contact, changes in pitcher usage leading to more fresh arms late in games), enlightening though such discussion might be. I’m interested now in the comparison of rates across time and our perceptions of various pitchers based on those rates.

For example, look at Dwight Gooden’s 1985 season. He completely dominated the opposition that year and easily won the Cy Young Award, but his K/9 was 8.72. Not so great, right? Well, it was good enough for second in the National League, whose K/9 checked in at 5.50. In 2009, however, that same rate would have placed him 10th in a league that averaged 7.03 K/9.

Tools like ERA+ and OPS+ adjust for context. Wouldn’t it be useful to apply a similar adjustment to other metrics such as K/9? Without getting into park effects (a discussion for some other day), what if we made a simple adjustment to account for league norms? The basic formula: (player K/9)/(league K/9)*100. Numbers above 100 are above league average; numbers below 100 are, yep, below average.

In Gooden’s case, we get 8.72/5.50*100 = 159. How would that play in 2009? Well, Tim Lincecum led the NL with 10.43 K/9. Running his numbers, we get 10.43/7.03*100 = 148.

So, Lincecum had a much higher strikeout rate (by 1.71) in 2009 than Gooden did in 1985. But taking context into account, we see that the entire league had a much higher strikeout rate (by 1.53) in 2009 than in 1985. In today’s environment, Gooden’s 8.72 K/9 from 1985 would translate to 11.15 (i.e., 8.72/5.50*7.03), which looks more impressive and gives us a better appreciation of his accomplishments.
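The arithmetic in the last few paragraphs can be sketched in a few lines of Python. The function names `adj_k9` and `translate_k9` are invented here for illustration; the inputs are the Gooden and Lincecum figures quoted above.

```python
def adj_k9(player_k9, league_k9):
    """League-adjusted K/9 index: 100 is league average."""
    return player_k9 / league_k9 * 100


def translate_k9(player_k9, from_league_k9, to_league_k9):
    """Re-express a K/9 in another league environment, keeping the same index."""
    return player_k9 / from_league_k9 * to_league_k9


gooden_1985 = adj_k9(8.72, 5.50)      # about 158.5, which rounds to 159
lincecum_2009 = adj_k9(10.43, 7.03)   # about 148.4, which rounds to 148
gooden_in_2009 = translate_k9(8.72, 5.50, 7.03)

print(round(gooden_1985), round(lincecum_2009), round(gooden_in_2009, 2))
```

Like the formula itself, this ignores park effects and anything else beyond the league-wide rate.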

Express numbers in big units

We could evaluate multiple seasons or even entire careers in a similar manner. For example, Nolan Ryan’s career K/9 checks in at a spiffy 9.55. That’s nice, but it doesn’t compare well with Randy Johnson’s 10.61.

Okay, but how much of this is due to Ryan and Johnson, and how much is due to their respective environments? Check out the top 10 strikeout rates (min. 162 IP) for each pitcher:

| Ryan | | Johnson | |
|---|---|---|---|
| Year | K/9 | Year | K/9 |
| 1987 | 11.48 | 2001 | 13.41 |
| 1989 | 11.32 | 2000 | 12.56 |
| 1973 | 10.57 | 1995 | 12.35 |
| 1991 | 10.56 | 1997 | 12.30 |
| 1972 | 10.43 | 1998 | 12.12 |
| 1976 | 10.35 | 1999 | 12.06 |
| 1977 | 10.26 | 2002 | 11.56 |
| 1990 | 10.24 | 1993 | 10.86 |
| 1978 | 9.97 | 1994 | 10.67 |
| 1974 | 9.93 | 2004 | 10.62 |

There is no comparison, at least not in terms of raw numbers. But when we introduce context, the picture changes. Here’s that same list, with a few extra bits of information added to the mix:

| Ryan | | | | Johnson | | | |
|---|---|---|---|---|---|---|---|
| Year | K/9 | Lg K/9 | Adj K/9 | Year | K/9 | Lg K/9 | Adj K/9 |
| 1987 | 11.48 | 6.00 | 191 | 2001 | 13.41 | 6.92 | 194 |
| 1989 | 11.32 | 5.43 | 208 | 2000 | 12.56 | 6.68 | 188 |
| 1973 | 10.57 | 5.07 | 209 | 1995 | 12.35 | 6.00 | 206 |
| 1991 | 10.56 | 5.71 | 185 | 1997 | 12.30 | 6.20 | 198 |
| 1972 | 10.43 | 5.48 | 190 | 1998 | 12.12 | 6.56 | 185* |
| 1976 | 10.35 | 4.73 | 219 | 1999 | 12.06 | 6.64 | 182 |
| 1977 | 10.26 | 4.97 | 207 | 2002 | 11.56 | 6.71 | 172 |
| 1990 | 10.24 | 5.60 | 183 | 1993 | 10.86 | 5.71 | 190 |
| 1978 | 9.97 | 4.49 | 222 | 1994 | 10.67 | 6.03 | 177 |
| 1974 | 9.93 | 4.89 | 203 | 2004 | 10.62 | 6.69 | 159 |

*Johnson split ’98 in the AL and NL. For the sake of simplicity, I used MLB average in this instance; a more accurate method would be to prorate his time spent in each league, but again, this will suffice for our purposes.
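For the split-season case mentioned in the note on Johnson's 1998, one plausible way to prorate is to weight each league's K/9 by the innings the pitcher threw there. The sketch below assumes that weighting, and the innings and league rates are placeholders, not Johnson's actual 1998 figures.

```python
def prorated_league_k9(stints):
    """Innings-weighted league K/9 for a pitcher who changed leagues.

    `stints` is a list of (innings_pitched, league_k9) pairs, one per league.
    """
    total_ip = sum(ip for ip, _ in stints)
    weighted = sum(ip * lg_k9 for ip, lg_k9 in stints)
    return weighted / total_ip


# Placeholder values: 100 IP in a 6.0 K/9 league, 50 IP in a 7.5 K/9 league.
baseline = prorated_league_k9([(100.0, 6.0), (50.0, 7.5)])
print(baseline)  # 6.5
```

The result would then stand in for Lg K/9 when computing the adjusted figure for that season.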

Now let’s look only at Adj K/9, and order Ryan’s and Johnson’s top 10 seasons according to this metric:

| Ryan | | Johnson | |
|---|---|---|---|
| Year | Adj K/9 | Year | Adj K/9 |
| 1978 | 222 | 1995 | 206 |
| 1976 | 219 | 1997 | 198 |
| 1973 | 209 | 2001 | 194 |
| 1989 | 208 | 1993 | 190 |
| 1977 | 207 | 2000 | 188 |
| 1974 | 203 | 1998 | 185 |
| 1987 | 191 | 1999 | 182 |
| 1972 | 190 | 1994 | 177 |
| 1991 | 185 | 2002 | 172 |
| 1990 | 183 | 2004 | 159 |
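The re-ranking can be reproduced from the K/9 and Lg K/9 columns. This sketch does it for Ryan's ten seasons, using the rounded values published in the earlier table; the variable and function names are illustrative.

```python
# (year, K/9, league K/9) for Ryan, copied from the context table.
ryan = [
    (1987, 11.48, 6.00), (1989, 11.32, 5.43), (1973, 10.57, 5.07),
    (1991, 10.56, 5.71), (1972, 10.43, 5.48), (1976, 10.35, 4.73),
    (1977, 10.26, 4.97), (1990, 10.24, 5.60), (1978, 9.97, 4.49),
    (1974, 9.93, 4.89),
]


def adj_k9(k9, league_k9):
    """League-adjusted K/9 index: 100 is league average."""
    return k9 / league_k9 * 100


# Sort seasons from highest adjusted rate to lowest.
ranked = sorted(
    ((year, adj_k9(k9, lg)) for year, k9, lg in ryan),
    key=lambda row: row[1],
    reverse=True,
)

for year, adj in ranked:
    print(year, round(adj))
```

The order matches the table. Two of the recomputed values (1973 and 1977) come out one point lower than the published 209 and 207, presumably because the published figures were calculated from unrounded rates.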

This is no knock on Johnson, who racked up some terrific strikeout numbers during those years, but isn’t it interesting how something that seems so clear when viewed one way can become less certain when examined through a different lens? Perhaps most telling is the comparison of Ryan’s ’78 and Johnson’s ’01:

| Ryan | | | Johnson | | |
|---|---|---|---|---|---|
| K/9 | Lg K/9 | Adj K/9 | K/9 | Lg K/9 | Adj K/9 |
| 9.97 | 4.49 | 222 | 13.41 | 6.92 | 194 |

Johnson’s strikeout rate is nearly 35 percent higher than Ryan’s, but he’s pitching in an environment whose rate is 54 percent higher than Ryan’s.
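Those two percentages come straight from the four numbers in the table. A quick check in Python (the helper name is made up for illustration):

```python
def pct_higher(a, b):
    """How much higher a is than b, as a percentage of b."""
    return (a / b - 1) * 100


pitcher_gap = pct_higher(13.41, 9.97)  # Johnson '01 vs. Ryan '78
league_gap = pct_higher(6.92, 4.49)    # Johnson's league vs. Ryan's league

print(round(pitcher_gap, 1), round(league_gap, 1))
```

The league gap is larger than the pitcher gap, which is why Ryan's adjusted figure ends up higher.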

So what?

It’s disturbingly easy to fall into the trap of believing that the way things are now is the only way they ever have been or ever will be. It’s easy because change often is more subtle than we expect, slowly worming its way into our consciousness while we’re busy going about the business of everyday life.

When did teams start using 11- and 12-man pitching staffs? When did umpires start throwing balls out of play after they’d bounced in the dirt? Who knows. Things evolve and—unless they’re obvious and call attention to themselves, like the designated hitter experiment or enforcement of the balk rule—often go unnoticed until one day, like David Byrne, you may ask yourself, “How did I get here?”

For this reason, it’s good to look back at Gooden’s ’85 or Ryan’s ’78 and recognize that even though their strikeout rates might not be mind-blowing by current standards, they are no less impressive. Stick this year’s Lincecum in the 1978 AL and he’d be below 7 K/9 (more precisely, 6.66, good for fourth in the AL behind Ryan, Ron Guidry, and—brace yourself—Ken Kravec); that’s no knock on Lincecum or his abilities, just a reflection of the difference 30 years can make.

I’m not really stating anything new here (Derek Jacques discussed making adjustments to strikeout rates a while back at Baseball Prospectus, and I’m sure others have entertained similar ideas), but every now and then I think about these things. I hear people cite this statistic or that statistic as definitive proof of something or other in all possible universes, and it inspires me to hop onto my humble soapbox and invoke the names of long-forgotten Kravecs.

Plus I get a kick out of considering alternate realities. It is fun to contemplate how Johnson might have performed in Ryan’s environment, or what Gooden’s 1985 performance might have looked like had it occurred in 2009.

There could be practical applications of this as well, perhaps to provide additional context in the evaluation of minor-league players. For example, how does Adam Wainwright’s 10.06 K/9 at Macon in 2001 look in light of the South Atlantic League’s 8.03 mark that year? How would that differ if he’d posted the same strikeout rate in the International League, which checked in at 6.93 K/9?
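As a rough illustration of the Wainwright question, the same league-adjusted index can be computed against each league's rate. This is only a sketch: the function name is hypothetical, and it ignores age, park, and level of competition.

```python
def adj_k9(player_k9, league_k9):
    """League-adjusted K/9 index: 100 is league average."""
    return player_k9 / league_k9 * 100


wainwright_k9 = 10.06
in_sally_league = adj_k9(wainwright_k9, 8.03)          # South Atlantic League, 2001
in_international_league = adj_k9(wainwright_k9, 6.93)  # International League, 2001

print(round(in_sally_league), round(in_international_league))
```

The same raw rate works out to roughly 125 in one league and 145 in the other.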

There are more questions like these that we might wish to answer. Perhaps you have some of your own.

References & Resources

Baseball-Reference, of course.

Great stuff, Geoff. I share your fascination with this issue … several years back I explored it too:

http://www.hardballtimes.com/main/article/strike-zone-dominance-in-context-dazzy-and-pedro/

How about K/Batters Faced?

I’d wager there was a similar difference in BF/9 over time as well.
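A small sketch of why the denominator matters: two pitchers with identical K/9 can have different strikeout rates per batter faced if one of them faces more hitters per inning. The totals below are invented for illustration.

```python
def k_per_9(strikeouts, innings):
    """Strikeouts per nine innings."""
    return 9.0 * strikeouts / innings


def k_per_bf(strikeouts, batters_faced):
    """Share of batters faced who struck out."""
    return strikeouts / batters_faced


# Hypothetical pitchers: same strikeouts and innings, different batters faced.
pitcher_a = {"so": 200, "ip": 200.0, "bf": 800}
pitcher_b = {"so": 200, "ip": 200.0, "bf": 880}

for p in (pitcher_a, pitcher_b):
    print(k_per_9(p["so"], p["ip"]), round(k_per_bf(p["so"], p["bf"]), 3))
```

Both come out at 9.0 K/9, but the pitcher who allows more baserunners strikes out a smaller fraction of the hitters he sees.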

@Swami: Excellent point. It might be instructive to compare SO/9 with other metrics from each era, e.g., pitchers used per game, ISO, PA/HR. I haven’t studied the issue closely, but it seems that the risk of striking out is more acceptable to hitters (and the game itself) now than it was 30 years ago.

Put another way, I’m not sure Mark Reynolds (198 SO/600 PA) would have been allowed to survive with his approach back when Dave Kingman (147 SO/600 PA) was playing. The fact that there is now a place for guys like Reynolds (and Adam Dunn, Ryan Howard, etc.) who produce despite not making much contact surely plays a role in the differing strikeout rates.

@Steve: Oh, wow. I dug around for stuff on this issue but somehow missed your old article. In a way, I’m glad because I would have spent all my time reading that and blown off my own article. Great read!

One factor that I didn’t see addressed is that of innings pitched by starter. It seems reasonable to assume that if Ryan were pitching in Johnson’s era and had his innings limited, his strikeout rate per game would go up but his total strikeouts would go down. Vice versa for Johnson. Similar reasoning for Lincecum, Gooden, et al.

I’ve always found it curious that people think that pitcher usage or pitchers pitching more for strikeouts is the reason for the increase. It seems rather obvious that there has been a change in approach by the hitter.

#1-Sabermetrics has pointed out that strikeouts were no big deal and baseball has paid attention.

#2-The game is now more slanted in favor of the hitter so it pays to swing from the heels and go for the HR. Fenway Park is now a park which reduces HR on average when it was once one of the greatest HR ballparks in the league.

@HH: A change in hitting approach for the reasons you mention almost certainly is a factor, although we’d need to confirm what “seems obvious” by testing the hypothesis.

I’m also not sure that this is an either/or question. In other words, even if change in hitting approach proves to be a primary driver, it may well be that change in pitcher usage is a secondary driver (or that pitcher usage is somehow related to hitting approach).

Interesting things to ponder. Thanks!

Actually, in this case you could argue that the adjustment over-adjusts for context. Some of the reasons that might have contributed to the change (changes in pitcher usage, more team emphasis on strikeout pitchers) may affect totals but not the individual performance.

In other words, a 60% increase in strikeout rates between 1978 and 2001 may have come from (say) a 40% difference in factors that affected individual numbers (e.g. batter approach, umpire strike zones) and a 20% difference in factors that affected only team numbers (such as different pitcher usage or more strikeout pitchers on each team). This would mean that Ryan’s 10 K/9 would be equivalent to 14 K/9 in 2001 rather than 16 K/9.
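The arithmetic behind that 14-versus-16 comparison, as a short sketch. The 40/20 split is the hypothetical from the comment, not a measured quantity, and the function name is invented.

```python
def translate_partial(k9, individual_change):
    """Scale a K/9 by only the part of the league-wide change that is
    assumed to affect individual pitchers (expressed as a fraction)."""
    return k9 * (1 + individual_change)


full_adjustment = translate_partial(10.0, 0.60)     # all of the 60% applied
partial_adjustment = translate_partial(10.0, 0.40)  # only the assumed 40%

print(round(full_adjustment, 2), round(partial_adjustment, 2))
```

The hard part is estimating how the total change actually splits between the two kinds of factors.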

Putting it yet another way, the ratio of peak to average K/9 in the league may be affected by team tendencies such as greater emphasis on strikeouts. Looking at K distribution patterns (standard deviation) within a team can validate or invalidate this hypothesis.

Very interesting article, thanks! Just discussing how to improve the stat analysis even further.

Very cool. But I do have one quibble. There is a problem with ERA+ at the extremes, due to the fact that your ERA can only get so low. Likewise, K/9 can only get so high (27). Granted, even today’s K rates aren’t anywhere near 27. But it should still be harder to post a higher K rate now than it was in the past, when measured by a simple percentage. A distribution with standard deviations might be a better, if much harder, way of comparing. You’d also be able to tell how problematic the percentage is by seeing how close the curve was to normal.
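One way to sketch the standard-deviation idea is a z-score: how many standard deviations a pitcher's K/9 sits above the mean of his league's pitchers. The league sample below is a tiny made-up list, and using the sample standard deviation is an assumption; a real version would need every qualifying pitcher's rate for that season.

```python
from statistics import mean, stdev


def k9_z_score(pitcher_k9, league_k9_rates):
    """Standard deviations above (or below) the mean of the league's K/9 rates."""
    return (pitcher_k9 - mean(league_k9_rates)) / stdev(league_k9_rates)


def k9_percent_of_league(pitcher_k9, league_k9_rates):
    """The simple percentage approach, for comparison."""
    return pitcher_k9 / mean(league_k9_rates) * 100


# Hypothetical league of three qualifying pitchers.
league = [4.0, 5.0, 6.0]

print(k9_z_score(7.0, league))            # 2.0
print(k9_percent_of_league(7.0, league))  # 140.0
```

Two eras with the same league average but different spreads would give the same percentage and different z-scores, which is the distinction the comment is after.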

I’m not certain, but I’m betting Lincecum would compare more favorably to Mr. Kravec using my suggested approach, especially if you could also take Swami’s point into account.